The Actor Agency Alignment Model (A3M): Escaping the Fog of Agency in the Emergent AI Enterprise

The Fog of Agency

We have entered the wild west of AI adoption. Across the global enterprise, the race to transition from conversational chatbots to agentic Artificial Intelligence (AI) is accelerating. Every department is building, buying, or birthing autonomous agents designed to move beyond mere information retrieval and into cross-functional execution.

Yet, as these agents proliferate, a thick Fog of Agency has settled over the organization. We see it in the Context Collapse, where AI performs in the lab but fails in the field because its reasoning is brittle in high-ambiguity environments. We feel it in the Value Gap, where massive compute costs yield negligible ROI because the actors lack the socio-technical fit needed to meet expectations. Most dangerously, we encounter it in the unplanned risk of the "mishap," where a system operates behind a Veil of Competence, producing fluent, authoritative results that mask a total lack of situational awareness or organizational accountability (Cummings, 2017; Bender et al., 2021). In this fog, everyone is building agents, but no one knows who is accountable for what those agents do.

Success is a Configuration of Agency

Large Language Models (LLMs) are quickly becoming commodity infrastructure. The competitive advantage of the enterprise will not be determined by who possesses the "best" model, but by who achieves the most robust configuration of contribution.

To go agentic safely and at scale, we must stop treating AI as a tool and start configuring it as a functional actor within a Socio-Technical System (STS) that requires precise calibration (Trist & Bamforth, 1951). We must bridge the gap between human-centric Industrial-Organizational (IO) psychology and technical risk management. Success requires us to determine whether, and under what conditions, the person or the platform should do the work.

Introducing the Actor Agency Alignment Model

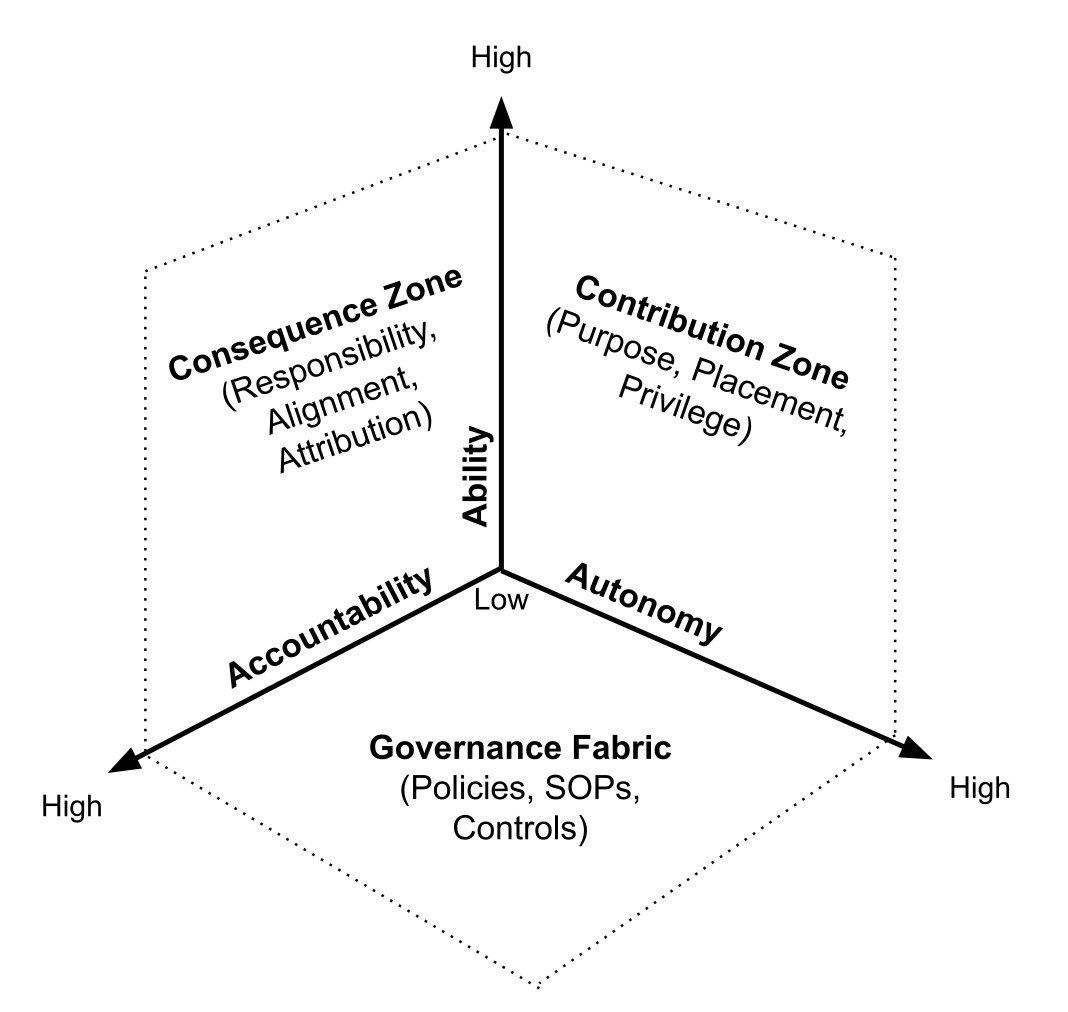

The Actor Agency Alignment Model (A3M) provides a three-dimensional framework against which planners can evaluate every actor in the enterprise, be they carbon or silicon. By plotting an actor along three axes, we reveal the "Agency Profile" of the role.

Ability: The verified functional capacity to perform a task. In IO, this is traditionally measured via KSAOs (Knowledge, Skills, Abilities, and Other characteristics) to predict job performance (Schmidt & Hunter, 1998).

Autonomy: The latitude of action or "permission set" granted to the actor. This builds upon Sheridan’s levels of automation, ranging from manual support to full executive agency (Sheridan & Verplank, 1978).

Accountability: The measure of risk absorption and answerability. Unlike traditional motivation-based models, this axis focuses on Agency Theory and the "Principal-Agent" problem, where the agent’s actions must be tethered to the principal’s interests (Jensen & Meckling, 1976).

The Actor Agency Alignment Model (A3M)

These axes create three critical views of your future talent architecture:

The Contribution Zone (Ability & Autonomy): Where we evaluate the actor. Is the actor's "can do" aligned with its "may do"?

The Consequence Zone (Ability & Accountability): Where we evaluate ownership of outcomes. If there is a violation of performance, safety, or rights, who is legally or financially tethered to the result?

The Governance Fabric (Accountability & Autonomy): Where we evaluate the ecosystem configuration. This plane defines the policies and SOPs that ensure the environment is calibrated for risk-aware performance (Anwar et al., 2024).
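As a minimal sketch of how a planner might record an Agency Profile, the Python below stores the three axes as scores and exposes the three views as pairs of axes. The 0.0 to 1.0 scale, the `AgencyProfile` name, the sample actor, and the `autonomy_surplus` gap measure are illustrative assumptions; the A3M itself does not prescribe a scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgencyProfile:
    """Hypothetical A3M coordinates for one actor (assumed 0.0 to 1.0 scale)."""
    actor: str
    ability: float         # verified functional capacity ("can do")
    autonomy: float        # latitude of action granted ("may do")
    accountability: float  # risk absorption and answerability

    def __post_init__(self):
        # Reject scores outside the assumed scale.
        for name in ("ability", "autonomy", "accountability"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0.0 and 1.0, got {value}")

    def contribution_zone(self):
        """Ability and Autonomy view."""
        return (self.ability, self.autonomy)

    def consequence_zone(self):
        """Ability and Accountability view."""
        return (self.ability, self.accountability)

    def governance_fabric(self):
        """Accountability and Autonomy view."""
        return (self.accountability, self.autonomy)

    def autonomy_surplus(self):
        """Positive when "may do" exceeds "can do" (assumed gap measure)."""
        return round(self.autonomy - self.ability, 2)


# Hypothetical actor: granted more latitude than its verified ability supports.
agent = AgencyProfile("invoice-agent", ability=0.6, autonomy=0.9, accountability=0.1)
print(agent.contribution_zone())
print(agent.autonomy_surplus())
```

A positive surplus in this sketch would flag an actor in the Contribution Zone whose permissions outrun its verified capacity; how large a gap is tolerable remains a judgment for the organization.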

Actor Agency Alignment is Essential to the Future of Work

1. Strategy: Are You Ready for Tomorrow’s Talent Pool?

Strategy is fundamentally about the allocation of resources to achieve a sustainable competitive advantage. In the Resource-Based View (RBV) of the firm, success is driven by resources that are valuable and difficult to imitate (Barney, 1991). Intelligent technology is disrupting longstanding ways of working that were built on RBV. As organizations consider human and technical capital strategies, they can use the A3M to:

Examine the implications of human and technical “talent” capital reconfiguration.

Identify opportunities and overlaps in talent architecture to focus workforce acquisition and development efforts.

Evaluate future worker abilities against controls and consequences to minimize risk.

Evaluate the organizational implications of emerging, expanding, and established ways of working.

Measure the A3M profile against expected business outcomes to proactively close the Value Gap before massive compute costs are incurred.

Strategies must be grounded. The Actor Agency Alignment Model provides a missing perspective on strategic organizational readiness within the AI era.

2. Governance: Work Is Shifting. Is Your Governance Adapting?

Traditional governance moves at human speed; agentic AI moves at inference speed. By focusing on the Governance Fabric (Autonomy & Accountability), organizations can reevaluate their posture to move from manual, extrinsic SOPs to intrinsic guardrails. This thinking aligns with modern IT governance frameworks like COBIT, which emphasize the alignment of business goals with technical controls (ISACA, 2018). The A3M forces an evaluation of the governance fabric required for tomorrow’s workforce, ensuring that as autonomy grows, the monitoring protocols are adjusted proportionally.
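One way to picture monitoring that scales with autonomy is a review sampling rate that rises linearly as latitude is granted. The sketch below is an assumption for illustration only: the `review_sample_rate` function, the 0.0 to 1.0 autonomy scale, and the floor and ceiling values are hypothetical and are not drawn from COBIT or from the A3M.

```python
def review_sample_rate(autonomy, floor=0.05, ceiling=1.0):
    """Fraction of an actor's outputs to review, scaled linearly with autonomy.

    autonomy: assumed score from 0.0 (manual support) to 1.0 (full executive agency).
    floor, ceiling: hypothetical minimum and maximum review fractions.
    """
    if not 0.0 <= autonomy <= 1.0:
        raise ValueError("autonomy must be between 0.0 and 1.0")
    if not 0.0 <= floor <= ceiling <= 1.0:
        raise ValueError("require 0.0 <= floor <= ceiling <= 1.0")
    return floor + (ceiling - floor) * autonomy


# Higher autonomy leads to a larger share of outputs being sampled for review.
for level in (0.0, 0.5, 1.0):
    print(level, review_sample_rate(level))
```

A linear rule is only the simplest choice; an organization might prefer step thresholds or weight the rate by the consequence of error.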

3. Change: Mapping the Moral Crumple Zone

The greatest risk of AI adoption is the "Moral Crumple Zone." This is a phenomenon where a human is placed in a role with 100% Accountability but 0% Ability to verify the AI's work and 0% Autonomy to intervene (Elish, 2019). The Actor Agency Alignment Model allows change agents to map this structural shift. It ensures we aren't just upskilling workers, but rebalancing their axes to prevent burnout and the bi-axial friction (human-to-human and human-to-AI organizational friction) that arises from mismatched job characteristics (Hackman & Oldham, 1976). The A3M’s three bi-axial views (Contribution Zone, Consequence Zone, Governance Fabric) are the primary levers for mitigating this friction and ensuring job characteristics are aligned with organizational risk.
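The crumple-zone pattern described above can be sketched as a simple check on a role's three axes. This is an illustration under stated assumptions: the function name, the 0.0 to 1.0 scale, and the `high` and `low` thresholds are hypothetical, and real roles would need a richer assessment than three numbers.

```python
def in_moral_crumple_zone(ability, autonomy, accountability, high=0.8, low=0.2):
    """Return True when a role carries high accountability while both the
    ability to verify the work and the autonomy to intervene are low.

    All scores use an assumed 0.0 to 1.0 scale; thresholds are hypothetical.
    """
    return accountability >= high and ability <= low and autonomy <= low


# A supervisor who answers for AI output but can neither verify nor intervene.
print(in_moral_crumple_zone(ability=0.1, autonomy=0.0, accountability=1.0))
# The same supervisor after rebalancing: trained to verify and empowered to intervene.
print(in_moral_crumple_zone(ability=0.7, autonomy=0.6, accountability=1.0))
```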

4. Operational Excellence: Stability in a Stochastic World

A stochastic world is one that involves chance: even if you know the starting point, you cannot predict the exact outcome with certainty. Operational Excellence traditionally favors the reduction of variance (Six Sigma). AI agents, however, introduce stochastic variance that traditional Lean processes are not designed to mitigate. The A3M allows OpEx leads to balance this randomness with enterprise stability. By evaluating and calibrating the Contribution Zone (Ability & Autonomy), we identify where AI's contextual limits are likely to cause disruption, errors, harm, and rework within the socio-technical system. The insight gained from evaluating the Contribution Zone enables organizations to establish stochastic quality control within the Governance Fabric that pierces AI’s Veil of Competence (Cummings, 2017).

From Experimentation to A3M-Certified Deployment

If your organization is currently navigating any of the following high-stakes dilemmas, the Actor Agency Alignment Model offers the strategic lens you need to explore, choose, and implement a way forward:

The Pilot-to-Production Chasm: You have successfully piloted high-ability AI tools, but you are stuck in "pilot purgatory" because you lack a governance framework to safely grant them the autonomy required to scale.

The Accountability Vacuum: Your legal and compliance teams are blocking deployment because they cannot identify a "principal" actor to own the liability of a non-human agent’s stochastic errors.

The Moral Crumple Zone: Your middle managers are experiencing "supervisory burnout," feeling they have been made the scapegoats for AI outputs they have neither the time to verify nor the technical ability to correct.

The ROI Mirage: You have made significant investments in "cutting-edge" models (Ability) but have seen negligible productivity gains because the organizational governance fabric is too restrictive to allow for meaningful execution (Autonomy).

Stochastic Operational Drift: Your Lean or Six Sigma processes are being disrupted by the unpredictable mishaps of agentic AI, leading to rework and a loss of trust in automated workflows.

Shadow Agency: You suspect shadow AI is already executing business-critical tasks across your departments without an audit trail, leaving the organization exposed to unmapped and unmanaged risk.

The path forward requires A3M-Certified Deployment. Before any agent is assigned a high-stakes task, the organization must be able to define its A3M coordinates. We must pierce the veil of competence with the needle of calibrated governance. Only then can we stop wandering in the wild west and start building an enterprise that is not only faster but fundamentally more stable.

References

Anwar, U., Saparov, A., Rando, J., Paleka, D., Turpin, M., Hase, P., Lubana, E. S., Jenner, E., Casper, S., Sourbut, O., Edelman, B. L., Zhang, Z., Günther, M., Korinek, A., Hernandez-Orallo, J., Hammond, L., Bigelow, E., Pan, A., Langosco, L., ... & Corsi, G. (2024). Foundational Challenges in Assuring Alignment and Safety of Large Language Models. arXiv. https://doi.org/10.48550/arxiv.2404.09932

Barney, J. (1991). Firm resources and sustained competitive advantage. Journal of Management, 17(1), 99-120. https://josephmahoney.web.illinois.edu/BA545_Fall%202022/Barney%20(1991).pdf. https://doi.org/10.1177/014920639101700108

Bender, E. M., Gebru, T., McMillan-Major, A., & Mitchell, M. (2021). On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 610–623. https://doi.org/10.1145/3442188.3445922

Cummings, M. L. (2017). Automation bias in intelligent time critical decision support systems. AIAA 3rd Unmanned Unlimited Technical Conference, Workshop and Exhibit. https://maritimesafetyinnovationlab.org/wp-content/uploads/2023/02/Automation-Bias-in-Intelligent-Time-Critical-Decision-Support-Systems.pdf.

Elish, M. C. (2019). Moral crumple zones: Cautionary tales in human-robot interaction. Engaging Science, Technology, and Society, 5, 40-60. https://doi.org/10.17351/ests2019.260

Hackman, J. R., & Oldham, G. R. (1976). Motivation through the design of work: Test of a theory. Organizational Behavior and Human Performance, 16(2), 250-279. https://web.mit.edu/curhan/www/docs/Articles/15341_Readings/Group_Performance/Hackman_et_al_1976_Motivation_thru_the_design_of_work.pdf.

ISACA. (2018). COBIT 2019 Framework: Introduction and Methodology. ISACA. ISBN: 978-1-60420-763-7. https://www.isaca.org/resources/cobit

Jensen, M. C., & Meckling, W. H. (1976). Theory of the firm: Managerial behavior, agency costs and ownership structure. Journal of Financial Economics, 3(4), 305-360. https://doi.org/10.1016/0304-405X(76)90026-X

Schmidt, F. L., & Hunter, J. E. (1998). The validity and utility of selection methods in personnel psychology: Practical and theoretical implications of 85 years of research findings. Psychological Bulletin, 124(2), 262-274. https://doi.org/10.1037/0033-2909.124.2.262

Sheridan, T. B., & Verplank, W. L. (1978). Human and computer control of undersea teleoperators. Massachusetts Institute of Technology, Man-Machine Systems Laboratory. https://apps.dtic.mil/sti/citations/ADA057655

Smerek (2025). AI, Coal Mining, and Estrangement from Work. Psychology Today. https://www.psychologytoday.com/us/blog/learning-at-work/202504/ai-coal-mining-and-estrangement-from-work

Trist, E. L., & Bamforth, K. W. (1951). Some social and psychological consequences of the longwall method of coal-getting. Human Relations, 4(1), 3-38. https://doi.org/10.1177/001872675100400101